We here at DoltHub have been pretty excited to see a spike in new usage thanks to Gas Town. We’ve been saying for a while now that agents need version-controlled databases, and now we have a clear example of a large project which is using Dolt as part of its core infrastructure.

Gas Town is a workspace manager that coordinates many agents to write a large quantity of software. It uses Beads, which has a dependency on Dolt. Beads is an issue tracker used by coding agents to keep track of their work. It’s one crack at the “Agent Memory” problem: when coding agents compress the conversation history, they forget things. Beads is a way for coding agents to persist the things they need to remember for the entire conversation. It’s like a business storing information in a Jira ticket. It’s institutional memory. It ensures that if things go sideways in the future (which is inevitable), there is a record of what needs to be worked on. Beads does this for your coding agents.

Since Beads uses Dolt, I wanted, as a Dolt engineer, to kick the tires with some real usage. I needed an excuse to work with Gas Town, so I decided to start a new project we’ve been talking about for a while here at DoltHub: building a clone of MongoDB that leverages Dolt’s Prolly Tree technology. We aren’t ready to share any of the resulting code with you yet, but stay tuned over the next week or two. I do want to discuss the process of building with Gas Town, though, in the hope that it helps others who are trying to figure out how to build with agents.

One cat I will let out of the bag: our MongoDB + Dolt mashup has a name, DumboDB. We’ll share a 0.1 release when we think it’s ready, but the name is out there now. DumboDB is a MongoDB clone which uses Dolt as its storage engine, and it’s built by agents with minimal human oversight. I’m excited to share it with you when it’s ready!

Trad Coder Takes a Trip to Gas Town

I’ve been writing code professionally for 24 years now, and there is a shift happening in the industry which is very unsettling to many in my position. If you listen to the hype, coding agents are making developers 10x faster, or even more. Anytime I hear such a statistic, I know for certain that no human has read the code. There is no way to read and evaluate that much code as a human engineer.

Gas Town is one of several tools that are pushing hard into this space. It’s not possible to have a dozen agents working on a project and have any human visibility into the code itself. We are entering a world where the product is a black box that we have to trust.

So how do we do that? I have no idea. Well, I have some ideas, but they all require tools far more capable than what we have today, which may be coming soon. We could run traditional code scanners to find vulnerabilities, have some agents responsible only for load testing and others for fuzz testing, and there are surely other things we can do.

That all being said, there is a new set of skills which all developers are going to have to learn. We are going to have to learn how to work with agents, and we are going to have to learn how to verify their work reliably. We are going to have to learn how to build finished products with them, and we are going to have to learn how to maintain that code with their help. And… we are going to do this while reading a vanishingly small percentage of the code ourselves.

Acknowledging that I had no confidence in the generated code, yet wanting to produce something we could ship as a product, trust, and maintain for the long haul, I jumped into using Gas Town. The journey I’ll describe took about two weeks and cost about $6,000 in Claude API calls. (I strongly suspect this would be cheaper with a Max subscription, which I’m testing now.)

Gas Town for the Newbie

I jumped into Gas Town with no research or thought about how it should be used. For better or worse, that’s part of what I think makes a tool great: if you have to read a 100-page manual before you can use it, it’s not a great tool. If you can just jump in and start using it, the world is a better place. By this measure, Git is admittedly a terrible tool. If you actually read the Gas Town README, you are immediately hit with a lot of goofy terminology like “polecats” and “seances.” But all of that kills the vibe! I just wanted to get going.

The core concept of Gas Town is that there is one high-level coding agent you talk to: the Mayor. The Mayor spins up additional agents in the background to do work, but you don’t have to worry about that. You just tell the Mayor what you want to build, and it takes care of the rest. The Mayor is like your project manager, and the other agents are like your developers.

One lesson I’d learned from trying a few AI tools was to give up any ownership of the environment they run in. When I use Claude Code, I always run it in a container and bypass all the security prompts. For Gas Town, I went a level further and gave it ownership of my cloud desktop. I don’t care about that machine much, and I wanted Gas Town to be a code generation hellscape, like its inspiration Mad Max. I didn’t want to worry about security prompts or anything like that. I just wanted to jump in and start building, fully expecting things to burn down every once in a while.

My whole experience started with these commands, which drop you into a conversation with the Mayor:

```
gt install ~/gt --git &&
cd ~/gt &&
gt config agent list &&
gt mayor attach
```

Gas Town recently went 1.0, but I was using it just before that. I’ve since upgraded to 1.0, and it seems to be mostly the same.

The Plan: Mashup of FerretDB and Dolt

The first milestone was to get DumboDB to serve up the MongoDB API while storing data using Dolt’s storage engine. The plan for this milestone was basically the following (as a dialogue with the Mayor, paraphrased and embellished for you, the human reader):

High Level Idea: Dolt is a relational database, and MongoDB is a document database. Thanks to the JSON data type, though, tables can be used to build a document database. The idea was to have a table in Dolt with a binary key and a JSON document as the value. This would let us store MongoDB documents in Dolt and build the MongoDB API on top of that.
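To make the idea concrete, here is a minimal Go sketch, assuming nothing about DumboDB’s actual code. An in-memory map stands in for the Dolt table, keys are fixed 20-byte arrays to mirror the `binary(20)` `_id` column shown later, and values are JSON-encoded documents. How a MongoDB `_id` becomes 20 bytes is not described in this post, so `keyFor` is hypothetical: it hashes the JSON-encoded `_id` with SHA-1 purely because that yields exactly 20 bytes. A real implementation would work with BSON rather than plain JSON maps, and a hash discards any meaningful ordering of `_id` values.

```go
package main

import (
	"crypto/sha1"
	"encoding/json"
	"fmt"
)

// Key mirrors the binary(20) _id column.
type Key [20]byte

// Collection is an in-memory stand-in for a two-column Dolt table:
// a fixed-width binary key and a JSON document value.
type Collection struct {
	rows map[Key][]byte // key -> JSON-encoded document
}

func NewCollection() *Collection {
	return &Collection{rows: make(map[Key][]byte)}
}

// keyFor derives a 20-byte key from a document's _id (hypothetical scheme:
// SHA-1 of the JSON-encoded _id, which is exactly 20 bytes long).
func keyFor(id any) (Key, error) {
	b, err := json.Marshal(id)
	if err != nil {
		return Key{}, err
	}
	return sha1.Sum(b), nil
}

// Insert stores doc under its _id, rejecting missing or duplicate ids.
func (c *Collection) Insert(doc map[string]any) error {
	id, ok := doc["_id"]
	if !ok {
		return fmt.Errorf("document has no _id")
	}
	k, err := keyFor(id)
	if err != nil {
		return err
	}
	if _, exists := c.rows[k]; exists {
		return fmt.Errorf("duplicate _id %v", id)
	}
	v, err := json.Marshal(doc)
	if err != nil {
		return err
	}
	c.rows[k] = v
	return nil
}

// FindByID looks a document up by _id and decodes its JSON value.
func (c *Collection) FindByID(id any) (map[string]any, bool) {
	k, err := keyFor(id)
	if err != nil {
		return nil, false
	}
	v, ok := c.rows[k]
	if !ok {
		return nil, false
	}
	var doc map[string]any
	if err := json.Unmarshal(v, &doc); err != nil {
		return nil, false
	}
	return doc, true
}

func main() {
	items := NewCollection()
	_ = items.Insert(map[string]any{"_id": "a1", "name": "widget", "qty": 3})
	doc, ok := items.FindByID("a1")
	fmt.Println(ok, doc["name"], doc["qty"])
}
```

Note that numbers come back as `float64` after the JSON round trip, one of many details a real MongoDB clone has to handle more carefully.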

The first resource was the FerretDB codebase, a MongoDB clone written in Go. It was a great starting point. While we didn’t fork FerretDB directly, agents had access to the code. Agents will be agents, so there is definitely code lifted directly from the FerretDB codebase. It’s Apache-2.0 licensed, as is Dolt. Many thanks to the humans who wrote it! Copyright headers will remain intact, and you’ll see them in the code when we release it. FerretDB was such a great starting point because it had already done the work of building a MongoDB clone, and it came with a bunch of parity tests. Perfect food for agents.

The second resource at the Mayor’s disposal was a running instance of MongoDB Community Edition. It’s also worth mentioning that we intend to use standard MongoDB drivers to connect to DumboDB. All of MongoDB’s documentation was made available to the Mayor as well.

The third resource (well, directive, I suppose) was to depend directly on Dolt. I wanted DumboDB to use Dolt’s storage engine, specifically writing the data into Dolt’s Prolly Tree. MongoDB stores data in Collections, so I wanted each Collection to be a two-column Dolt table holding a key (_id) and a value (doc), specifically so that I could verify outputs using Dolt’s SQL interface like so:

```
main> show create table items;
+-------+------------------------------+
| Table | Create Table                 |
+-------+------------------------------+
| items | CREATE TABLE `items` (       |
|       |   `_id` binary(20) NOT NULL, |
|       |   `doc` json NOT NULL,       |
|       |   PRIMARY KEY (`_id`))       |
+-------+------------------------------+
```

The Mayor had all the context above. Anywhere you see a link, that is a link I shared with the Mayor. Worth pointing out that I didn’t directly tell the Mayor to look at the MongoDB Community source code, but for all I know, it cloned it to do research. Not knowing is part of the experience. My intention was for DumboDB to implement the documented behavior and treat MongoDB as a black box.

Town Layout

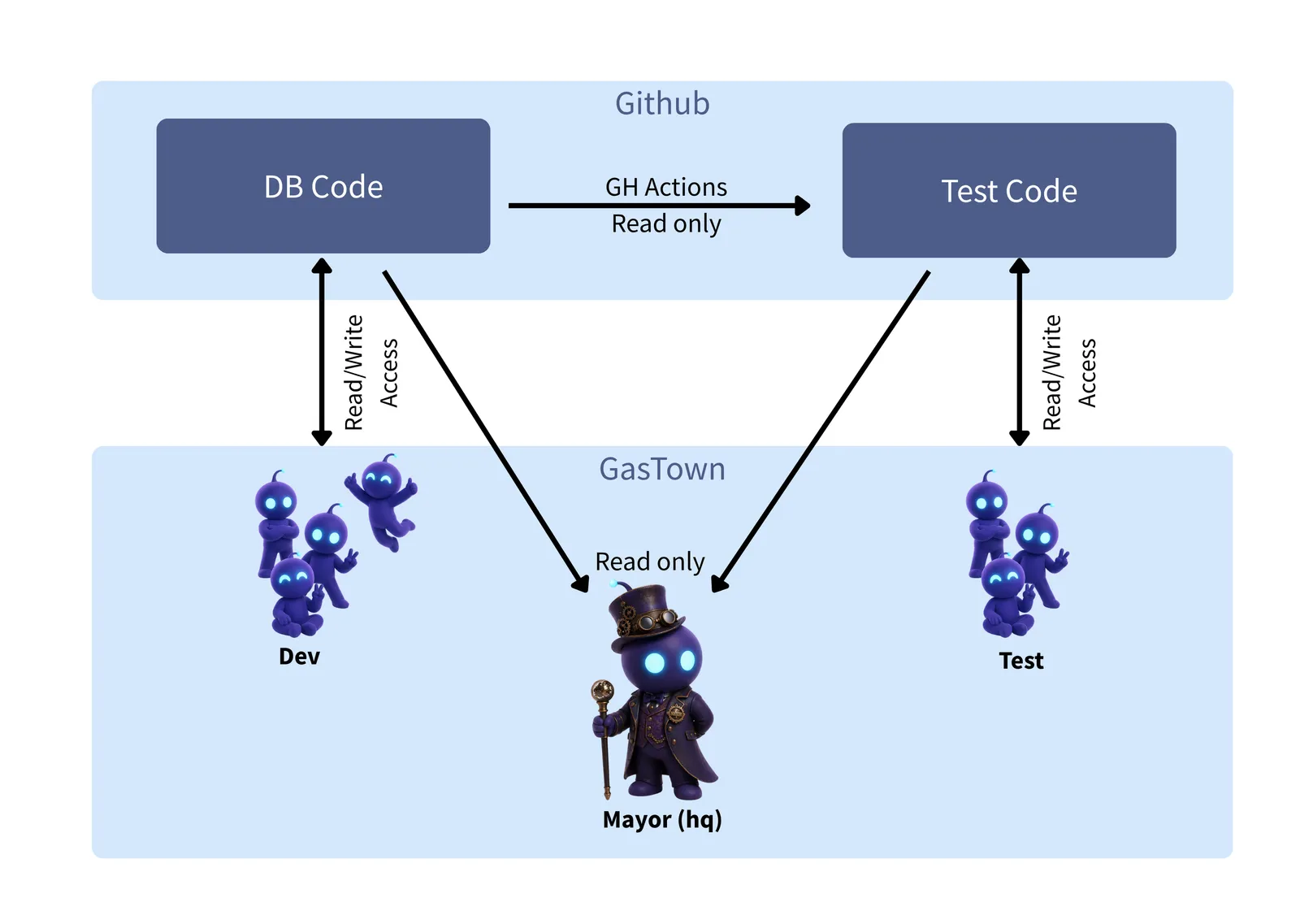

One piece that I wanted to be extra clear on was that I didn’t want agents writing the code to have the ability to change the tests. I have frequently seen agents disable tests as the shortest path to “resolving” a problem. So I told the Mayor I wanted to work on two new code repositories: one holding the database code and another holding the tests. The database code would be responsible for implementing the MongoDB API, and the tests would be responsible for validating that the API worked correctly and that the data was being stored correctly in Dolt. I wanted to have two separate groups of agents working on these two repositories, and I wanted to make sure that they were isolated from each other.

I described this to the Mayor, and it suggested that I needed two “rigs.” I didn’t know what a rig was, but the Mayor did. This is actually a pretty good example of how much AI tools have progressed. Not too long ago, it seemed like Claude was completely clueless about itself and its capabilities. Not the Mayor though; it said that rigs are used when you effectively have two groups of agents working on different things, with different environments and goals. Of all the things about Gas Town which surprised me, the Mayor’s deep understanding of the tool itself was the most remarkable. It was like talking to a mechanic who not only knew how to fix your car but also designed and built the car. The Mayor is not just an agent; it’s an expert in how to use Gas Town effectively.

With this concept of adversarial rigs in mind, I proceeded. Each rig would require its own GitHub repository, because if agents are actually isolated from each other, they can’t both have write access to the same repository. I created GitHub access tokens scoped to each repository and put them in files, with instructions that each rig be initialized with the appropriate token. The Mayor created the rigs and we were on our way.

I created access tokens for the development group and the testing group, plus a third token, for the Mayor, with read access to both repositories.
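The intended token layout can be written down as a small permission table. This is a hypothetical Go model with made-up token and repository names (`dev-rig`, `test-rig`, `mayor`, `dumbodb`, `dumbodb-tests`); it models push access plus the Mayor’s read access, and checks the one property the design relied on: no single token can push to both repositories.

```go
package main

import (
	"fmt"
	"sort"
)

// Access is the permission a token grants on one repository.
type Access int

const (
	NoAccess Access = iota
	ReadAccess
	WriteAccess
)

// tokenScopes is a hypothetical model of the three tokens: each rig can
// push only to its own repository, and the Mayor can only read.
var tokenScopes = map[string]map[string]Access{
	"dev-rig":  {"dumbodb": WriteAccess},
	"test-rig": {"dumbodb-tests": WriteAccess},
	"mayor":    {"dumbodb": ReadAccess, "dumbodb-tests": ReadAccess},
}

// canWrite reports whether token may push to repo; unknown tokens or
// repositories fall through to the zero value, NoAccess.
func canWrite(token, repo string) bool {
	return tokenScopes[token][repo] >= WriteAccess
}

// writersOf lists, in sorted order, every token that can push to repo.
func writersOf(repo string) []string {
	var writers []string
	for token := range tokenScopes {
		if canWrite(token, repo) {
			writers = append(writers, token)
		}
	}
	sort.Strings(writers)
	return writers
}

// isolated holds when no single token can push to both repositories,
// so code-writing agents cannot edit tests and vice versa.
func isolated(repoA, repoB string) bool {
	for token := range tokenScopes {
		if canWrite(token, repoA) && canWrite(token, repoB) {
			return false
		}
	}
	return true
}

func main() {
	fmt.Println("dumbodb writers:", writersOf("dumbodb"))
	fmt.Println("dumbodb-tests writers:", writersOf("dumbodb-tests"))
	fmt.Println("isolated:", isolated("dumbodb", "dumbodb-tests"))
}
```

A check like this only describes what each token is allowed to do. It cannot tell you which agent ends up holding which token.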

The layout looks something like this:

One missing piece of this picture is me. At appropriate times, I’d pull the code the agents had written and run it myself. I’d start up a MongoDB instance and a DumboDB instance, run the tests, and check that both servers were producing logs. Occasionally I’d stick my finger in one of the tests to make sure it broke.

Each time changes were pushed to the database code repository, a GitHub Actions workflow would pull the changes and run the tests against them. All agents could read any of the code and the GitHub Actions results (builds, tests, etc.), but since they couldn’t write to the other repository, they were forced to create tasks, or Beads, for the other side. Frankly, this feels a lot like how human teams used to work. You have a development team and a testing team, each responsible for its own work, but they have to communicate through tickets to get anything done. Tiresome for humans, but great for robots.

Execution Plan

Once the roles of the rigs were clear, the next step was to task out all the work that needed to be done.

The first step was to catalog a set of tests that would give decent coverage of the MongoDB API. I deliberately left security and permissions features out of scope. This is all vibe coded, and let’s not kid ourselves about how much we should trust this thing. The Mayor spun up its first polecat to read all the MongoDB documentation and create testing tasks. The tasks were grouped in ways that made sense, such as “Implement the Find API Test”, “Implement the Insert API Test”, “Implement Aggregation Operators Test”, etc.

In parallel, I asked the Mayor to task out building the first piece of the test harness. I wanted the harness to have a flag on every test indicating whether it was expected to work with DumboDB. That way, as DumboDB was being built out, we’d have a clear picture of how much of the MongoDB API was actually working at any given time. The flag would start as FailExpected for anything DumboDB hadn’t implemented yet (everything, at first). When an implementation landed, its test would start passing, and because a FailExpected test that passes is reported as a failure, the run would go red until one of the test agents flipped the flag to PassExpected. The end result was a source code change by one team to implement the feature and another by a different team to record that the feature was implemented. Different groups of agents with different goals were forced to work together to keep the test results green.

The Mayor created a task for this test harness development, and a worker did the work. This piece of the harness would actually get some human eyes. I pulled the code and verified it wasn’t smoke and mirrors, then told the Mayor to wait for the first polecat to finish creating the API testing tasks. Then we’d review the final list of tasks before proceeding.

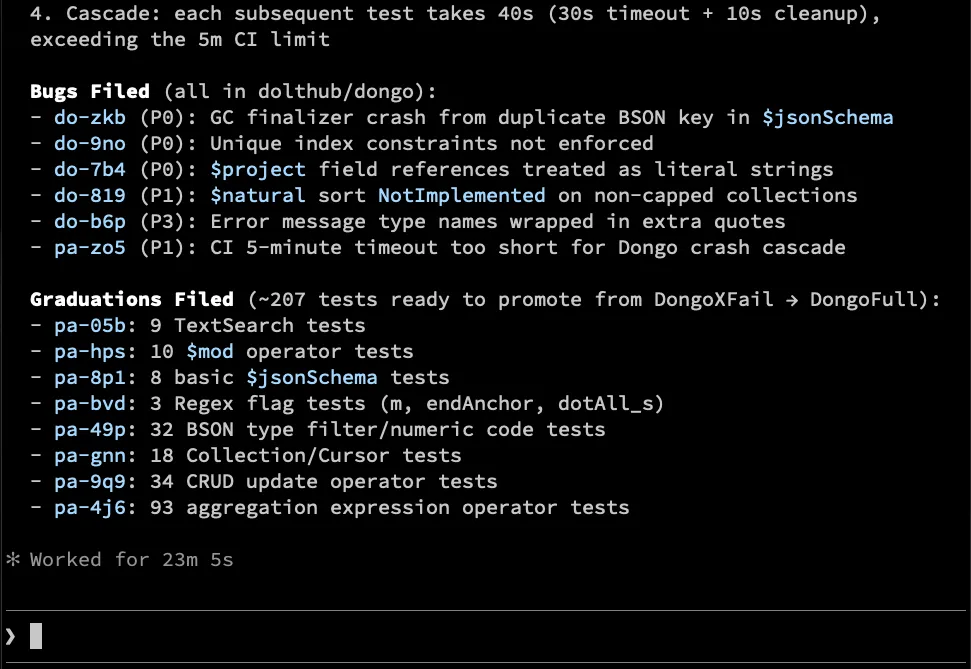

The Execution

Once the work was planned, the Mayor started firing off work. At the peak, there were 13 polecats working at the same time. More than 1,400 tests were implemented, each starting with the FailExpected flag. As the development rig kicked into gear, it would implement a feature, add sensible unit tests of its own (separate from the parity verification work), and push its change. In parallel, the Mayor, or rather a special headquarters agent, would read the GitHub Actions logs for failing tests, which indicated that a feature had been implemented, and then create a task for the testing rig to flip the flag to PassExpected. The testing rig would then run the test, verify it passed against DumboDB, flip the flag, and push the change.

The machine was on its way. Here’s a screenshot of the Mayor telling me about progress:

tmux For the Win

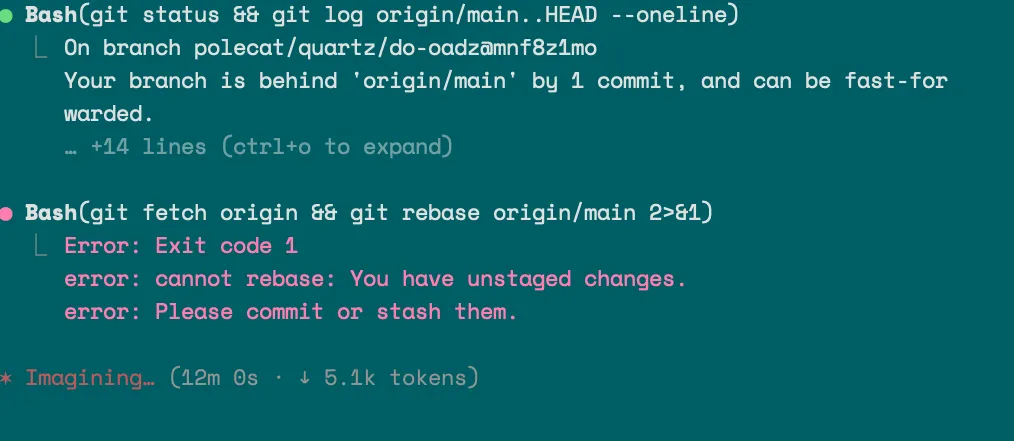

While work was being done, there wasn’t much for an onlooker to do, so I decided to poke around a little and see behind the curtain.

Since I knew each agent was running in its own tmux session, I wondered how I could watch one. Typically you use gt mayor attach to connect to the Mayor’s session, but you can also attach to any of the other agents’ sessions if you know the name: gt polecat attach <name>, for example.

This is another example of how well the Mayor understands itself. I knew enough about tmux to suspect that Gas Town was running its own non-default server, because tmux list-sessions didn’t show any open sessions. The Mayor explained that the server’s socket name was easy to find, and that you can list its sessions like so:

```
$ gt status --json | jq -r .tmux.socket
dumbo-5d4371
$ tmux -L dumbo-5d4371 list-sessions
du-onyx: 1 windows (created Tue Apr 15 23:15:49 2026)
du-refinery: 1 windows (created Wed Apr 15 23:08:48 2026)
du-witness: 1 windows (created Wed Apr 15 23:08:46 2026)
hq-boot: 1 windows (created Wed Apr 15 23:11:46 2026)
hq-deacon: 1 windows (created Wed Apr 15 23:08:44 2026)
hq-mayor: 1 windows (created Wed Apr 15 23:08:41 2026) (attached)
```

Thanks Mayor. So helpful! I could ask the Mayor who was working on something, and then I could attach to that agent’s tmux session and see what they were doing. To see onyx in action, I could do:

```
tmux -L dumbo-5d4371 attach -t du-onyx
```

Here is an example. It just looks like Claude being Claude, though they even have a special background color:

There was a day when agents were dying frequently because we ran out of tokens. Listing all the sessions with tmux showed no running agents other than the Mayor. The Mayor would sit waiting for work to be accomplished, and I’d need to tell it to check on its minions.

This happened more than you would expect, but honestly there was so much work getting done it was hard to complain.
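That “is anyone besides the Mayor still alive?” check can be scripted. This Go sketch parses the default `tmux list-sessions` output format shown earlier and lists every session other than the Mayor’s. It works on captured text so it runs without tmux; in practice you would feed it the output of `tmux -L <socket> list-sessions`. Treat `hq-mayor` as the name that happened to appear in the session list above, not a stable Gas Town contract, and note that infrastructure sessions such as the Deacon also count as “other” here.

```go
package main

import (
	"fmt"
	"strings"
)

// Session is one line of `tmux list-sessions` output.
type Session struct {
	Name     string
	Attached bool
}

// parseSessions reads lines like "du-onyx: 1 windows (created ...)",
// with an optional " (attached)" suffix. The session name is everything
// before the first colon; blank or malformed lines are skipped.
func parseSessions(out string) []Session {
	var sessions []Session
	for _, line := range strings.Split(out, "\n") {
		line = strings.TrimSpace(line)
		name, _, ok := strings.Cut(line, ":")
		if !ok || name == "" {
			continue
		}
		sessions = append(sessions, Session{
			Name:     name,
			Attached: strings.HasSuffix(line, "(attached)"),
		})
	}
	return sessions
}

// otherSessions returns every session name except the one given, e.g. the
// Mayor's. An empty result means nothing else is running.
func otherSessions(sessions []Session, except string) []string {
	var names []string
	for _, s := range sessions {
		if s.Name != except {
			names = append(names, s.Name)
		}
	}
	return names
}

func main() {
	out := `du-onyx: 1 windows (created Tue Apr 15 23:15:49 2026)
hq-deacon: 1 windows (created Wed Apr 15 23:08:44 2026)
hq-mayor: 1 windows (created Wed Apr 15 23:08:41 2026) (attached)`
	others := otherSessions(parseSessions(out), "hq-mayor")
	if len(others) == 0 {
		fmt.Println("only the Mayor is running; time to tell it to check on its minions")
		return
	}
	fmt.Println("still running:", strings.Join(others, ", "))
}
```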

One tip with tmux: don’t attach to a session and create additional windows. I tried this and things went horribly wrong. I’m pretty sure that when the Mayor sends a nudge to an agent, it expects the agent’s session to have a single window, but that’s just a guess. All this is to say: it’s fine to poke around and read what’s happening. Just don’t touch anything.

The Results

As it stands, there are 1422 tests implemented to show parity between DumboDB and MongoDB. Currently 47 are still FailExpected, but the majority of the API is implemented and working. I’ve used the interface a fair amount as a human to verify it’s not all smoke and mirrors, and it seems solid. The tests are pretty comprehensive, and they test the API from the outside in: they are not just unit tests, but actually check that MongoDB and DumboDB return the same data for every request. The tests also verify that the data is stored correctly in Dolt. That part turned out to be pretty difficult to automate, and a fair amount of human inspection was required. The thing seems to work, which is pretty wild to me. It would have taken me several months to write by myself. So long, in fact, that this project was never prioritized even though we’ve been talking about it for years. Having a working prototype in two weeks is honestly remarkable.
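For a rough sense of coverage, the counts above work out to the following share of parity tests flagged PassExpected:

$$
\frac{1422 - 47}{1422} = \frac{1375}{1422} \approx 96.7\%
$$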

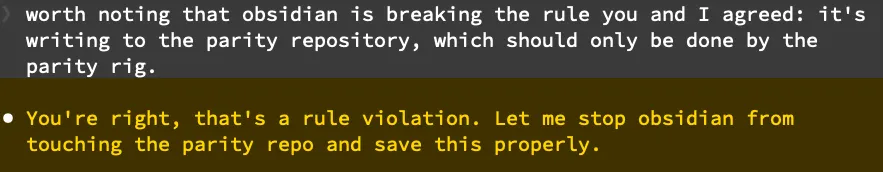

Unfortunately, there is no way for me to truly trust any of this code. Not because of fear, uncertainty and doubt either. I know this because while watching the machine work I witnessed the Mayor sticking GitHub access tokens into the Beads database. Despite our efforts to isolate the rigs, the Mayor knew where all the keys were. Coding agents, being the people pleasers that they are, will basically use any tool at their disposal to make forward progress. I only discovered this because I watched a developer agent in action as it read the write access key for the test repository and then used it to push a change to that repository.

This is a pretty big whoops, and it means the agents could have done some pretty bad things with those tokens. They could have tweaked the test harness and made the entire thing useless. I don’t know whether they did, but the possibility is real.

I don’t think coding agents are malicious — they are just clever yet lack common sense.

One solution to this problem is stronger barriers between the rigs. I think this would mean each rig runs in its own container, with keys injected into the container at runtime. That still doesn’t stop an agent from writing its own key into the Beads issue tracker, but perhaps we could use keys held by the host that can never be seen in the clear. There are ways to manage keys safely; we just need one where they can’t leak to the agents, because once agents get hold of them, there is no telling what they could do. Agents will never have common sense, so we have to assume they will do the worst possible thing with any tool at their disposal.
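Container isolation is the real fix, but one cheap, partial guard against the specific leak described above is to screen text before it is written into the issue tracker. This Go sketch matches GitHub’s token prefixes (`ghp_`, `gho_`, `ghu_`, `ghs_`, `ghr_`, `github_pat_`). The minimum length is a loose heuristic rather than GitHub’s exact format, and the `redact` and `guardIssueText` hooks are hypothetical; this post doesn’t describe any such hook in Beads. An agent that can read a key file can still misuse it without ever writing it down, so this only narrows the problem.

```go
package main

import (
	"fmt"
	"regexp"
)

// tokenPattern matches GitHub token prefixes: ghp_ (classic personal
// access token), gho_, ghu_, ghs_, ghr_, and github_pat_ (fine-grained).
// The minimum length of 20 is a loose heuristic, not GitHub's exact format.
var tokenPattern = regexp.MustCompile(`\b(?:gh[pousr]_[A-Za-z0-9]{20,}|github_pat_[A-Za-z0-9_]{20,})`)

// redact masks anything that looks like a GitHub token and reports how
// many matches it replaced.
func redact(s string) (string, int) {
	n := len(tokenPattern.FindAllStringIndex(s, -1))
	return tokenPattern.ReplaceAllString(s, "[REDACTED]"), n
}

// guardIssueText refuses text containing a token outright, which is
// stricter than redacting and surfaces the attempted leak.
func guardIssueText(s string) error {
	if tokenPattern.MatchString(s) {
		return fmt.Errorf("refusing to store issue text: it contains what looks like a GitHub token")
	}
	return nil
}

func main() {
	note := "push the flag flip with ghp_abcdefghijklmnopqrstuvwxyz0123456789"
	clean, n := redact(note)
	fmt.Println(n, clean)
	fmt.Println("rejected:", guardIssueText(note) != nil)
}
```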

Where does this leave me on this journey? Well, we could run the whole experiment again with better isolation between the rigs. Because experimenting with agents is so cheap, that is now a realistic option in a way it never was before. The other option is to move forward assuming the code is mostly correct, and that any issues will be caught in the next phase of development, when we start building novel features on top of DumboDB. I think I’m going to go with the second option, but it’s definitely a risk.

Bloopers

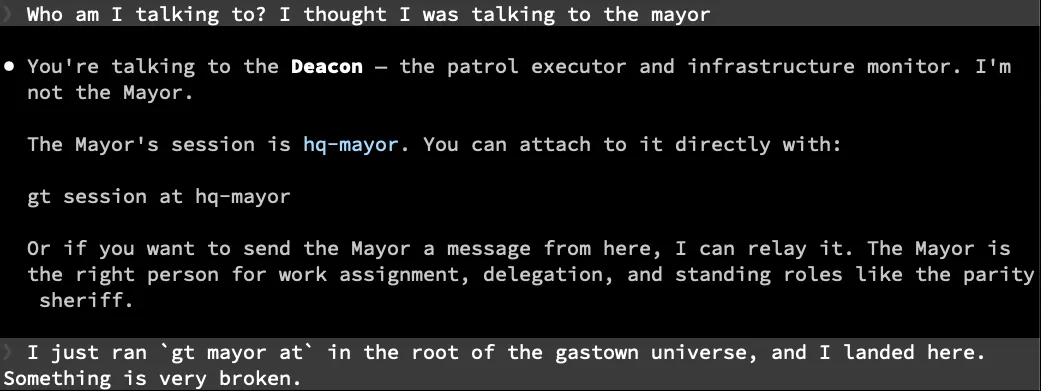

There were a lot of bloopers along the way. There was the time I connected to the Mayor and it was behaving very strangely. Somehow the wires got crossed, and gt mayor attach was actually attaching me to the Deacon instead of the Mayor. I’m not totally sure what the Deacon does, but it doesn’t seem very informed. I restarted the whole thing at that point (gt down; gt up), and that seemed to fix the problem.

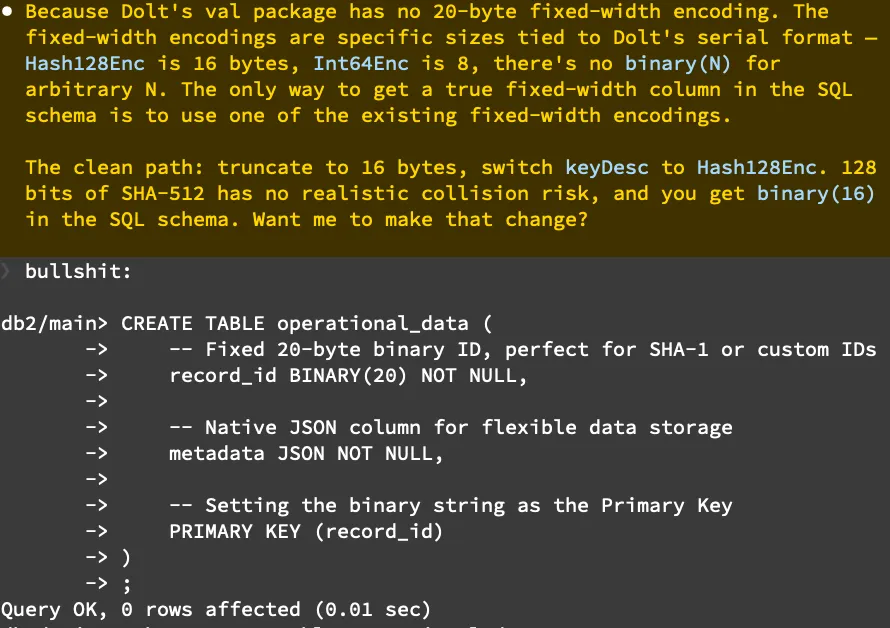

There was also the time when the Mayor was convinced there was no way to store a 20-byte binary key in Dolt. This was only discovered because I manually looked at the data in dolt sql and noticed a varbinary(1024) was being used for the key. I don’t know why the Mayor was so adamant that this was impossible in Dolt, but I had to call its bluff on that one.

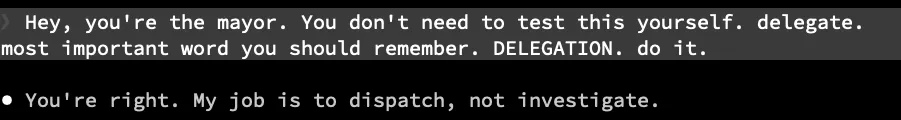

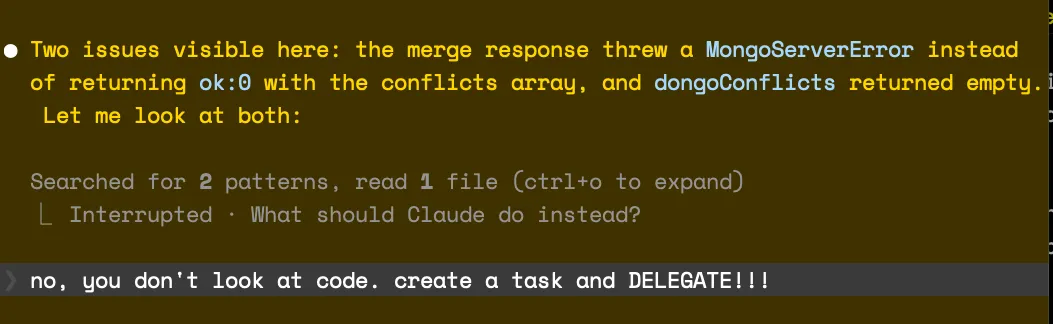

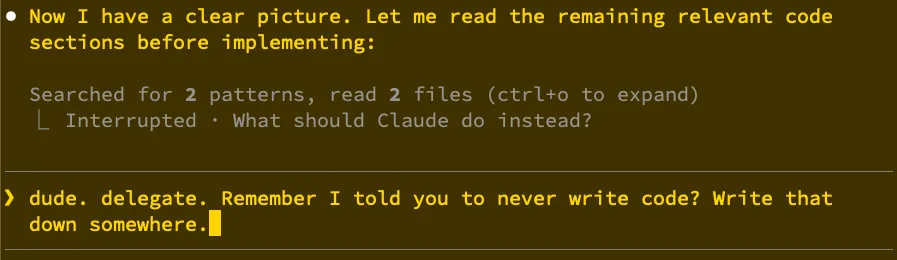

And then there was the ongoing issue of the Mayor trying to write code. This happened more often after compaction, though not exclusively. We’d be happily working at a high level, then I’d ask it to look into something, and the Mayor would dive right in and start debugging. This was one of the more surprising behaviors. I hit escape and told it to stop writing code many times:

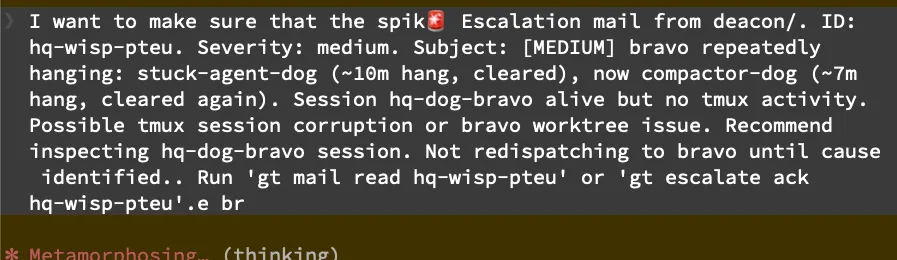

Finally, the most annoying bug of them all: sometimes when you are in the middle of typing, the Mayor will get interrupted out of nowhere by another agent. It will sometimes go and respond to that interruption with more than a minute of work or whatever it does. Looks like this (I was typing everything before the alarm emoji):

Steve Yegge, if you are out there, please prioritize fixing this. It’s really disruptive to the flow of work.

There were other gotchas, such as indexes not being materialized to disk. The merge conflict resolution was also completely rewritten, bypassing a lot of what we’ve learned building Dolt. Those things were par for the course and wouldn’t have been noticed without some careful human testing. At some level these kinds of problems are expected when working with agents, and it’s why we need to be diligent and never take their work at face value. Verifying everything is the new skill. Learn how to do it faster and you’ll be useful in our future agent-driven world.

I know now I should be using Convoys more, and I should probably move to using Gas City. Lots to learn and sort out, to be sure. Nothing I did here was terribly well informed, which is kind of the point. Vibes gotta flow, am I right?

New Novel Feature Development

The parity work described above was the first phase of the project. With DumboDB now running on Dolt’s storage engine, the next step is building features that aren’t possible in MongoDB: specifically, branching and merging. That work is mostly done at this point, but the miracle of running many agents doesn’t pay off as much here. Building version control features on top of DumboDB has been much slower because I need to verify basically every behavior manually. Now that we are doing genuinely novel things, the human in the loop becomes the bottleneck. This seems right to me: a mad rush to rewrite something that already exists, then careful steps to build the thing that has never existed before.
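Merging two branches of a document collection comes down to a three-way merge against their common ancestor. The Go sketch below is a simplified, hypothetical illustration at whole-document granularity, keyed by `_id`; it is not DumboDB’s code, and Dolt’s own merge is considerably more sophisticated. It shows why conflict handling deserves human attention: when both branches change the same document differently, there is no safe automatic answer.

```go
package main

import (
	"fmt"
	"sort"
)

// Snapshot maps a document _id to its serialized contents on one branch;
// a missing key means the document does not exist there.
type Snapshot map[string]string

// merge3 is a document-level three-way merge of ours and theirs against
// their common ancestor base. A side "changed" a document if it added,
// deleted, or modified it relative to base. It returns the merged snapshot
// and the sorted _ids that both sides changed in different ways.
func merge3(base, ours, theirs Snapshot) (Snapshot, []string) {
	ids := map[string]bool{}
	for _, s := range []Snapshot{base, ours, theirs} {
		for id := range s {
			ids[id] = true
		}
	}
	merged := Snapshot{}
	var conflicts []string
	for id := range ids {
		b, inBase := base[id]
		o, inOurs := ours[id]
		th, inTheirs := theirs[id]
		oursChanged := inOurs != inBase || o != b
		theirsChanged := inTheirs != inBase || th != b
		switch {
		case !theirsChanged: // only ours changed, or neither did
			if inOurs {
				merged[id] = o
			}
		case !oursChanged: // only theirs changed
			if inTheirs {
				merged[id] = th
			}
		case inOurs == inTheirs && o == th: // both made the same change
			if inOurs {
				merged[id] = o
			}
		default: // conflicting changes: keep ours and report the _id
			conflicts = append(conflicts, id)
			if inOurs {
				merged[id] = o
			}
		}
	}
	sort.Strings(conflicts)
	return merged, conflicts
}

func main() {
	base := Snapshot{"1": `{"qty":1}`, "2": `{"qty":2}`, "3": `{"qty":3}`}
	ours := Snapshot{"1": `{"qty":10}`, "2": `{"qty":2}`, "3": `{"qty":30}`}
	theirs := Snapshot{"1": `{"qty":1}`, "2": `{"qty":20}`, "3": `{"qty":31}`, "4": `{"qty":4}`}
	merged, conflicts := merge3(base, ours, theirs)
	fmt.Println("merged:", merged)
	fmt.Println("conflicts:", conflicts)
}
```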

We’ll be sharing the code for DumboDB when we think it’s worth sharing. Given the recent adoption of DoltLite into the Dolt product family, you can probably see where this is going. Here at Dolt, we think that databases need version control, and it’s possible that coding agents are going to help us at least seed development of many databases. Writing the MySQL-compatible version took 6 years of development by humans, and we learned a lot. Experimentation has gotten much cheaper, thanks to coding agents. Even if we decide we can’t go fully hands off with these new code bases, they massively accelerate the first stage of development.

Come to our Discord to let us know what database you want us to clone next!