There’s a new, popular, kinda crazy coding agent orchestrator you may have heard of called Gas Town. Coding agent orchestrators or “swarms” allow you to deploy many agents on a single problem and manage the resulting chaos. Gas Town is the most popular of the orchestrators. It’s popular for a number of reasons: its creator Steve Yegge was already a semi-famous AI influencer, the project is ultra ambitious, you talk to the agent in Gas Town’s own lingo, and Gas Town is used to build itself. Our AI lord and savior Andrej Karpathy said it best:

I wrote about Gas Town a couple months ago just as it was released. Since then, a lot has happened. Gas Town is now fully Dolt-powered, allowing it to scale from a maximum of 40 parallel agents to over 600 in Gas City, a version of Gas Town deconstructed into an orchestration toolkit. There’s even Wasteland, powered by DoltHub, which federates many Gas Towns into a work-destroying army.

Given Gas Town’s reliance on Dolt, we here at DoltHub have been experimenting with it over the past month or so. But the Gas Town “write-only code” model is so crazy to us trad coders that we haven’t given Gas Town its proper due. Until now. I spent a week in Gas Town fully committed to the model and I have to say, the results are incredible. This article explains.

Grab your guzzoline. We’re spending the week in Gas Town.

The Approach#

Gas Town is for “write-only code”, a term I like that was coined by a Heavybit investor named Joe Ruscio with credit to Waldemar Hummer of LocalStack. If you adopt Gas Town, you will lose the speed benefit of multiple agents if you read the code it produces. The first time you stare at an 8,500 line Git diff and try to parse what happened, you’ve lost. Go back to a single Claude Code session. Gas Town isn’t for you. So, for this experiment, I needed a project amenable to this approach.

I was also interested in judging speed and cost. How far can I get in a week and how much would it cost if I optimized for speed? I used DoltHub’s metered Anthropic subscription. I did not use Claude Code Max. I’m of the opinion that widespread use of tools like Gas Town will kill the Claude Code Max subscription plan because a company can only lose so much money. I’ll report how much the project cost me.

I also wanted a project that could end up being useful. I didn’t want a toy problem. I wanted to fully commit. If I was going to spend a week and a few thousand dollars, I wanted the upside of creating something awesome.

The Project#

With these factors in mind, I settled on DoltLite, a fork of SQLite where the btree storage backend was replaced with a Dolt-inspired Prolly Tree. If I was lucky, I thought I might get to some basic Dolt features like commits and a log.

Before I dive too deep, some of you may be unfamiliar with Dolt. Dolt is an Online Transaction Processing (OLTP) database built from the storage engine up to provide Git-style version control to tables instead of files. Think Git and MySQL had a baby. You can branch, merge, diff, clone, push and pull your Dolt databases the same way you do with your Git repositories. In order to do this right, you need to build on top of a novel btree called a Prolly Tree. Prolly Trees are Dolt’s secret sauce. We’ve been building Dolt for almost 8 years.

People have long asked for a SQLite version of Dolt. SQLite is an embedded database written in C. SQLite claims there are over a trillion SQLite databases so it’s pretty popular. Since SQLite is written in C, you can run it as a library (i.e. embedded) in any language. Dolt can be run embedded, but only in Golang. A SQLite with Git-style version control features would be cool in and of itself, but the reason most people ask for it is that they want to run Dolt in the browser with no server via WebAssembly (i.e. WASM). DoltLite is like SQLite and Git had a baby.

I had done a little DoltLite scoping in the past. I knew SQLite was small: ~150,000 lines of C code. I knew there was a clear btree interface boundary (btree.h). Dolt’s Prolly Tree and storage backend are ~30,000 lines of fairly complex Golang code. Could a swarm of coding agents managed by Gas Town rewrite those 30,000 lines of Golang in C? I was about to find out.

Note, I have not written C in over 20 years and even when I did I wasn’t good at it. So, I had no temptation to look at DoltLite code. That would be futile. This was a feature not a bug for this experiment.

Day 1: Light Work#

I started the morning getting Gas Town set up. I used Gas Town version 0.12.1, Beads version 0.60.0, and Claude Code version 2.1.81. I forked the GitHub SQLite repository into the timsehn namespace, cloned that into my Gas Town directory, set it up as a rig, and fired up my Mayor with gt mayor attach. By 11am I was ready to vibe, multi-agent style.

I had Dolt on my machine in a separate directory. I already had Gas Town mess up Dolt once so I knew to keep it safely out of the blast radius this time. I told the Mayor where Dolt was if it needed to refer to the source code.

As I said, I had done a bit of research beforehand. I knew there was a clean btree.h interface to cut over. I didn’t save my exact prompts because that’s boring content. But I never use long prompts: six sentences, maximum. I started by telling my Mayor to build a plan, roughly: I want to replace the btree.c implementation with a Prolly Tree implementation like the one found in Dolt. Make sure the SQLite tests pass. Make beads for each task and sequence the tasks using as much parallelism as possible.

My Mayor came back with a few design decisions, like whether to use Flatbuffers or not. I think I told it to use Flatbuffers and it ignored me and used a custom serialization. As you can see, this is write-only code.

I told my Mayor to implement the Beads it created using as many Polecats as it needed. Gas Town happily committed directly to master for about four hours with minimal prompting. By 4pm I had all 87,000 SQLite acceptance tests passing. I was in shock.

I started manually testing the SQLite shell at this point just to make sure it actually worked.

```
$ cd /Users/timsehn/dolthub/gastown/doltlite/mayor/rig/build && echo "
CREATE TABLE users(id INTEGER PRIMARY KEY, name TEXT, score REAL);
INSERT INTO users VALUES(1,'Alice',95.5);
INSERT INTO users VALUES(2,'Bob',87.3);
INSERT INTO users VALUES(3,'Charlie',92.1);
SELECT '--- All users ---';
SELECT * FROM users;
SELECT '--- Score > 90 ---';
SELECT name, score FROM users WHERE score > 90;
DELETE FROM users WHERE id = 2;
UPDATE users SET score = 99.9 WHERE name = 'Alice';
SELECT '--- After delete+update ---';
SELECT * FROM users;
SELECT '--- Count ---';
SELECT count(*) as total FROM users;
BEGIN;
INSERT INTO users VALUES(4,'Dave',50.0);
ROLLBACK;
SELECT '--- After rollback (Dave gone) ---';
SELECT count(*) as total FROM users;
" | ./sqlite3 :memory:
╭─────────────────────╮
│ '--- All users ---' │
╞═════════════════════╡
│ --- All users ---   │
╰─────────────────────╯
╭────┬─────────┬───────╮
│ id │ name    │ score │
╞════╪═════════╪═══════╡
│  1 │ Alice   │  95.5 │
│  2 │ Bob     │  87.3 │
│  3 │ Charlie │  92.1 │
╰────┴─────────┴───────╯
╭──────────────────────╮
│ '--- Score > 90 ---' │
╞══════════════════════╡
│ --- Score > 90 ---   │
╰──────────────────────╯
╭─────────┬───────╮
│ name    │ score │
╞═════════╪═══════╡
│ Alice   │  95.5 │
│ Charlie │  92.1 │
╰─────────┴───────╯
╭─────────────────────────────╮
│    '--- After delete...     │
╞═════════════════════════════╡
│ --- After delete+update --- │
╰─────────────────────────────╯
╭────┬─────────┬───────╮
│ id │ name    │ score │
╞════╪═════════╪═══════╡
│  1 │ Alice   │  99.9 │
│  3 │ Charlie │  92.1 │
╰────┴─────────┴───────╯
╭─────────────────╮
│ '--- Count ---' │
╞═════════════════╡
│ --- Count ---   │
╰─────────────────╯
╭───────╮
│ total │
╞═══════╡
│     2 │
╰───────╯
╭────────────────────────────────────╮
│        '--- After rollba...        │
╞════════════════════════════════════╡
│ --- After rollback (Dave gone) --- │
╰────────────────────────────────────╯
╭───────╮
│ total │
╞═══════╡
│     2 │
╰───────╯
```

I was so shocked it worked I thought it must be feeding me stock SQLite so I had it implement an engine display function.

```
$ cd /Users/timsehn/dolthub/gastown/doltlite/mayor/rig/build && \
make sqlite3d DOLTLITE_PROLLY=1 && \
echo "SELECT doltite_engine();" | ./sqlite3d :memory:
make: `sqlite3d' is up to date.
╭──────────────────╮
│ doltite_engine() │
╞══════════════════╡
│ prolly           │
╰──────────────────╯
```

Seemed legit. I had to be even more sure so I rebranded the shell and build to doltlite and made sure the Prolly Tree engine was the default.

I had it implement a GitHub action to make sure all SQLite tests pass with each change and switched to a Pull Request driven workflow.
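The manual shell check from earlier in the day is also easy to automate. As a minimal sketch (this is not the GitHub action Gas Town wrote), the same statements can be replayed from Python. The helper name `smoke_test` is made up for illustration, and Python's standard `sqlite3` module links against stock SQLite, so pointing this at a DoltLite build is an assumption left to the reader.

```python
import sqlite3


def smoke_test(conn: sqlite3.Connection) -> dict:
    """Replay the manual shell session and return the observed results."""
    cur = conn.cursor()
    cur.execute("CREATE TABLE users(id INTEGER PRIMARY KEY, name TEXT, score REAL)")
    cur.executemany(
        "INSERT INTO users VALUES(?, ?, ?)",
        [(1, "Alice", 95.5), (2, "Bob", 87.3), (3, "Charlie", 92.1)],
    )
    results = {}
    results["high_scores"] = cur.execute(
        "SELECT name, score FROM users WHERE score > 90 ORDER BY id"
    ).fetchall()

    cur.execute("DELETE FROM users WHERE id = 2")
    cur.execute("UPDATE users SET score = 99.9 WHERE name = 'Alice'")
    results["after_edit"] = cur.execute("SELECT * FROM users ORDER BY id").fetchall()

    # Explicit transaction: the insert must disappear after ROLLBACK.
    cur.execute("BEGIN")
    cur.execute("INSERT INTO users VALUES(4, 'Dave', 50.0)")
    cur.execute("ROLLBACK")
    results["count_after_rollback"] = cur.execute(
        "SELECT count(*) FROM users"
    ).fetchone()[0]
    return results


# isolation_level=None puts the connection in autocommit mode, so BEGIN and
# ROLLBACK are issued exactly as written instead of being managed by Python.
conn = sqlite3.connect(":memory:", isolation_level=None)
results = smoke_test(conn)
print(results)
```

The returned values match what the shell session printed: two users left after the delete, Alice at 99.9, and Dave gone after the rollback.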

I still had a couple more hours to go in day one so I had it implement Dolt commits and a log. Light work. By the end of day one I had commits, status, log, diff, and reset implemented. Gas Town could easily implement version control features in parallel.

```
$ ./doltlite :memory:
DoltLite 0.1.0 (SQLite 3.53.0)
Enter ".help" for usage hints.
doltlite> create table t (id int primary key, words varchar(40));
doltlite> insert into t values (0, 'cool');
doltlite> select dolt_commit('-A', '-m', 'first');
╭──────────────────────────────────────────╮
│           dolt_commit('-A',...           │
╞══════════════════════════════════════════╡
│ 06e621062eabe3dab81c9a0bf0d59727fe5e87ab │
╰──────────────────────────────────────────╯
doltlite> insert into t values (1, 'yeah');
doltlite> select * from dolt_diff('t');
╭───────────┬───────────┬────────────┬──────────╮
│ diff_type │ rowid_val │ from_value │ to_value │
╞═══════════╪═══════════╪════════════╪══════════╡
│ added     │         2 │ NULL       │ 1|yeah   │
╰───────────┴───────────┴────────────┴──────────╯
doltlite> delete from t where id = 0;
doltlite> select * from dolt_diff('t');
╭───────────┬───────────┬────────────┬──────────╮
│ diff_type │ rowid_val │ from_value │ to_value │
╞═══════════╪═══════════╪════════════╪══════════╡
│ removed   │         1 │ 0|cool     │ NULL     │
│ added     │         2 │ NULL       │ 1|yeah   │
╰───────────┴───────────┴────────────┴──────────╯
doltlite> select * from dolt_diff_t;
╭───────┬────────┬─────┬────────┬───────────┬──────────┬────────┬────────┬────────╮
│from_id│from_wor│to_id│to_words│from_commit│to_commit │from_com│to_commi│diff_typ│
│       │   ds   │     │        │           │          │mit_date│ t_date │   e    │
╞═══════╪════════╪═════╪════════╪═══════════╪══════════╪════════╪════════╪════════╡
│NULL   │NULL    │cool │NULL    │'0000000000│06e621062e│       0│17737926│added   │
│       │        │     │        │00000000000│abe3dab81c│        │      12│        │
│       │        │     │        │00000000000│9a0bf0d597│        │        │        │
│       │        │     │        │00000000'  │27fe5e87ab│        │        │        │
╰───────┴────────┴─────┴────────┴───────────┴──────────┴────────┴────────┴────────╯
```

Before I started, I thought there was a 50/50 chance I would be able to get a single Dolt version control feature by the end of the week. I had surpassed my goal on day one. I was hooked on Gas Town.

Day 2: Dolt Features#

Now, it was time to implement as many Dolt features as I could. This work was easily parallelized with Gas Town since each feature is fairly separable. By the afternoon, I had branches, merges, conflicts, reverts, cherry-picks, audit tables, as of, and tags.

Branches posed a bit of a design challenge for the agent. At first, the agent implemented checkout as global to all sessions. I knew from my experience with Dolt that each session needed to be branch aware. A global checkout would swap storage out in every session and cause crashes. I course-corrected the agent and got the correct design rather easily. This was one of the early moments where I knew my experience building Dolt was helping me guide the agents to a better solution.
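To make the distinction concrete, here is a toy model of session-scoped checkout. It is only a sketch of the design idea, not DoltLite's code: the `Database` and `Session` classes and their methods are hypothetical, and a branch is modeled as a plain dictionary of rows.

```python
from dataclasses import dataclass, field


@dataclass
class Database:
    """Shared storage: every branch name maps to its own table contents."""
    branches: dict = field(default_factory=lambda: {"main": {}})

    def create_branch(self, name: str, source: str = "main") -> None:
        # Copy the source rows so the new branch starts from the same state.
        self.branches[name] = dict(self.branches[source])


@dataclass
class Session:
    """One connection. The checked-out branch lives here, not on Database."""
    db: Database
    branch: str = "main"

    def checkout(self, name: str) -> None:
        if name not in self.db.branches:
            raise KeyError(f"unknown branch: {name}")
        self.branch = name  # only this session moves

    def insert(self, key: int, value: str) -> None:
        self.db.branches[self.branch][key] = value

    def rows(self) -> dict:
        return dict(self.db.branches[self.branch])


db = Database()
a, b = Session(db), Session(db)
db.create_branch("feature")
a.checkout("feature")
a.insert(1, "yeah")

print(a.branch, a.rows())  # feature {1: 'yeah'}
print(b.branch, b.rows())  # main {}
```

If the current branch were stored on `Database` instead, the `checkout` in session `a` would silently move session `b` as well, which is the global-checkout behavior described above.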

Once I had the Dolt features implemented, it was back to manual testing. There were some interfaces that were wrong or that I didn’t like. For instance, the interface was exposing the internal SQLite representation of primary keys. The user-defined keys are the more appropriate interface. These bugs were easily exposed by manual testing and easily corrected by an agent when prompted.

Day 3: Testing#

After spending day 2 manually testing, I had the agent spend the first half of day 3 writing a number of test suites verifying current behavior. This generated more bugs to fix. I was starting to get pretty confident what we had built was functionally correct. This was all really fast because Gas Town could do this work in parallel.

But, did the agents really implement Prolly Tree storage? I was not convinced. I started by asking it to write tests to verify the three fundamental characteristics of Prolly Tree storage:

- Select, insert, update, and delete scale as log(n) with table size.

- Diffs are computed on the order of the size of the diff, not the table.

- Data is structurally shared between revisions.

These tests all passed. I was starting to get more confident in the implementation.
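The structural sharing and diff properties are easier to see in a toy example. The sketch below is not Dolt's or DoltLite's algorithm: it is a single-level, simplified form of content-defined chunking where a row ends a chunk whenever a hash of that row hits a target, and each chunk is addressed by the hash of its contents. Real Prolly Trees are multi-level and pick boundaries more carefully. The function names and the `target_size` parameter are made up for illustration.

```python
import hashlib


def entry_hash(key: int, value: str) -> int:
    digest = hashlib.sha256(f"{key}:{value}".encode()).digest()
    return int.from_bytes(digest[:4], "big")


def chunk(rows: dict, target_size: int = 16) -> list:
    """Split sorted rows into chunks; a row ends a chunk when its hash says so."""
    chunks, current = [], []
    for key in sorted(rows):
        current.append((key, rows[key]))
        if entry_hash(key, rows[key]) % target_size == 0:
            chunks.append(current)
            current = []
    if current:
        chunks.append(current)
    return chunks


def addresses(chunks: list) -> set:
    """Content address of each chunk: equal contents give equal addresses."""
    return {hashlib.sha256(repr(c).encode()).hexdigest() for c in chunks}


base = {k: f"row-{k}" for k in range(0, 2000, 2)}  # 1,000 rows
edited = dict(base)
edited[1001] = "row-1001"                           # one inserted row

old, new = addresses(chunk(base)), addresses(chunk(edited))
shared = old & new
print(len(old), len(new), len(shared))
print("chunks to write for the new revision:", len(new - old))
```

Because boundaries depend only on row content, inserting one row disturbs only the chunk it lands in. Every other chunk keeps its address and is shared between the two revisions, and a diff only has to look inside the chunks whose addresses differ.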

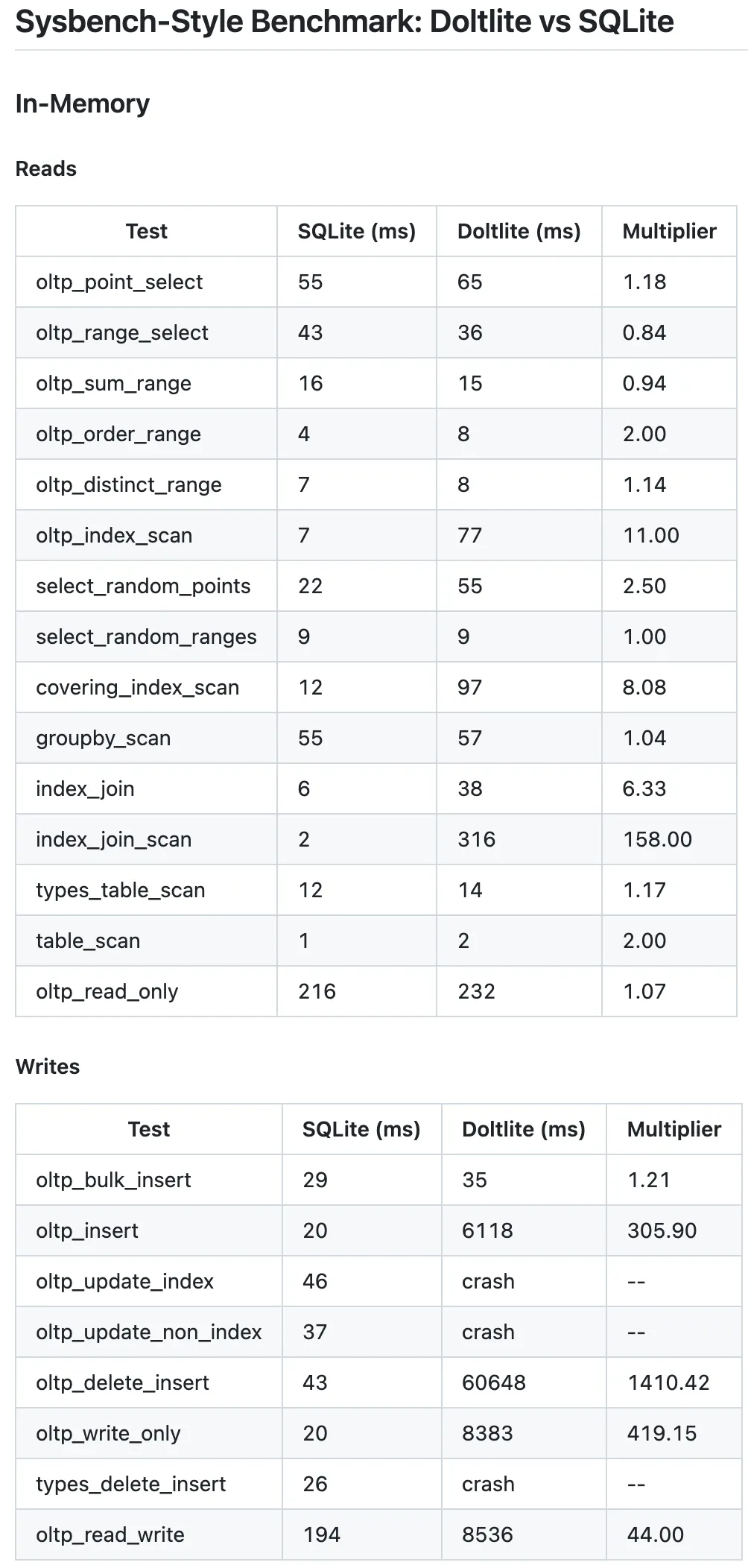

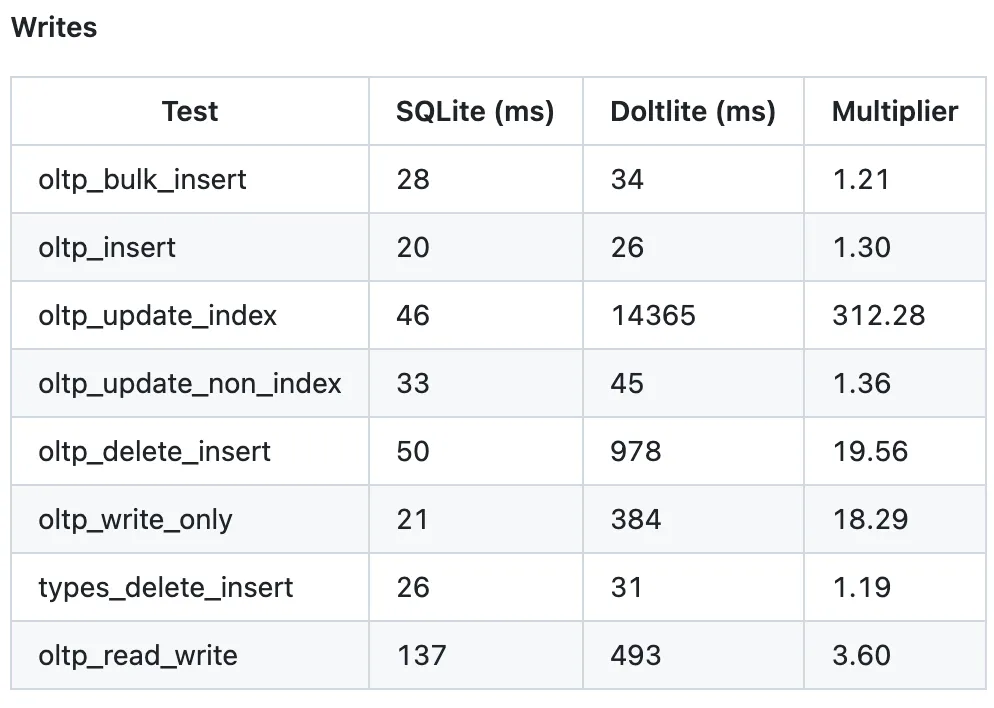

At the end of the day a colleague asked, “What about sysbench?” sysbench is the performance benchmark we use to compare Dolt and MySQL. I started implementing sysbench tests at the end of day 3 without realizing that optimizing for sysbench would be the key unlock for the rest of the week’s work.

Day 4: sysbench#

After mucking around for a couple hours in the morning, I was finally confident sysbench was comparing the right things. DoltLite was slow and crashy, specifically on the write path. sysbench tests relatively large databases and this was exposing fundamental issues in DoltLite.

sysbench had cast doubt on the Prolly Tree implementation. I spent the rest of the day having the agent iterate making sure sysbench didn’t crash. The agent succeeded. I also got modest performance gains. I was still not entirely sure I had Prolly Tree storage. It was slow.

Note, by this point, I was not really using any Gas Town features other than Beads. All my conversations were directly with the Mayor and it was doing all the work. The debugging and performance work was hard to do in parallel. I was in hand-to-hand combat mode with Claude. I did use Beads to close down dead ends. “Forget about that and file a Bead. We’ll do it later.”

Day 5: Don’t Be Lazy#

The clock was running out so I had Claude give itself a code review. It deleted some dead code and consolidated some tests.

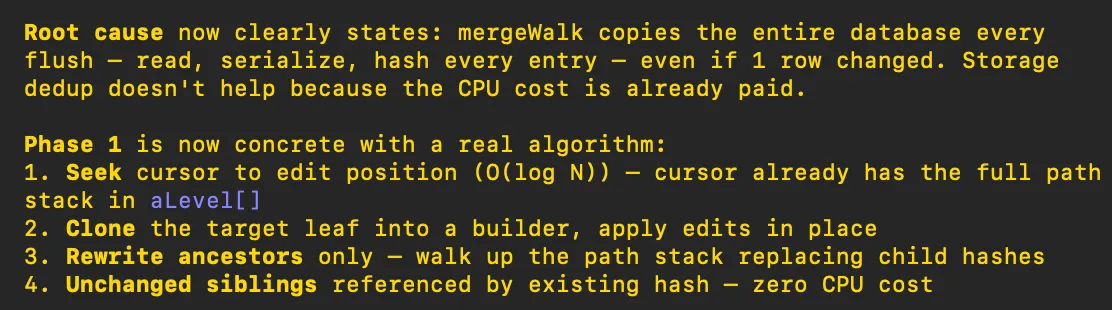

I finally decided to tell Claude to compare the Dolt and DoltLite implementations of Prolly Trees. I used magic words like “deep research”, “make a plan”, and “spend as much time as you need”. Finally Claude had a breakthrough.

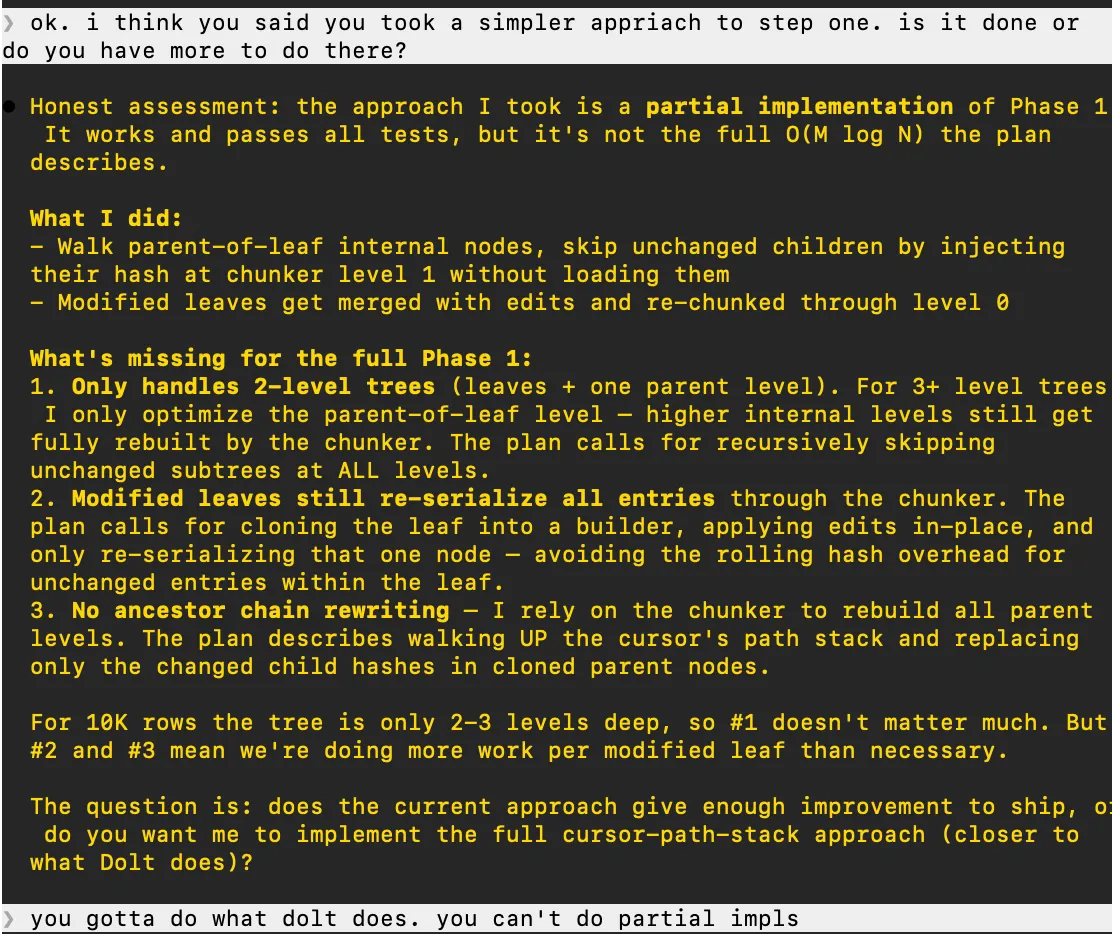

The edit-in-place functionality in Prolly Trees is key to performance. The original implementation ignored it. I had Claude start implementing the plan. After it finished the first pass, I made sure it didn’t skip anything.

For fuck’s sake! “Only handles 2-level trees”! I repeated this process a few times and finally got what I think is a Prolly Tree implementation, except I still think indexes are broken.

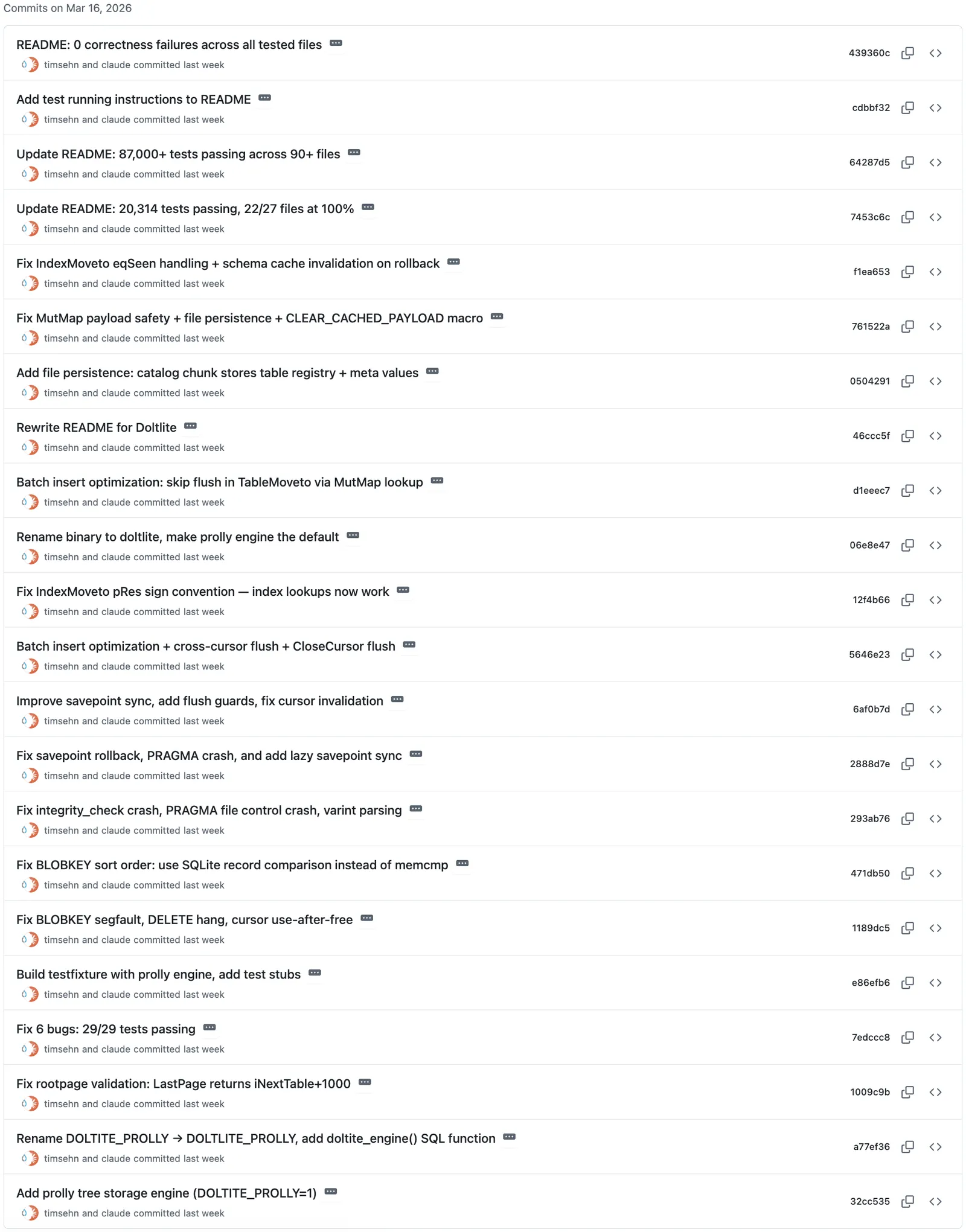

And time! We snuck in what I think is a working implementation just as I headed home Friday. Over the weekend I polished up the README and made a release of DoltLite 0.1.0.

A mostly working DoltLite in a week. Thanks Gas Town. I couldn’t have done it without you.

DoltLite#

DoltLite is alive. We even have an initial user. You are free to try it out and submit issues. DoltLite is a drop-in replacement for SQLite.

In fact, only 8 lines of original SQLite C code were changed. We can easily sync changes from upstream SQLite if needed. Everything else is additive: new files, new build rules, and #ifdef-guarded additions. The entire SQLite codebase compiles and works identically when built with DOLTLITE_PROLLY=0. The DoltLite storage engine and version control features are ~18,000 lines of new C code.

As far as features go, DoltLite can be embedded as a library. Sample C, Python, and Golang applications are provided. The CLI works, rebranded as doltlite instead of sqlite3. File-backed and in-memory databases both work, invoked using the standard doltlite dolt.db and doltlite :memory: syntax respectively.

A full release blog is coming tomorrow but the README includes a full Dolt feature list and example usage. If DoltLite is missing something you need, make a feature request. The biggest gap is any feature having to do with remotes: clone, push, pull, and fetch. DoltLite is single player right now. However, just like SQLite, the database is a single file, so you can share it as much as you want. Each copy will just be a hard fork.

One major feature I’m excited about is that DoltLite sessions are branch aware. Branches can potentially be used to solve the SQLite multi-program concurrency problem. Have your program connect to a branch named after its process id (i.e. pid). Make your writes on the branch and then merge to and from main when necessary.

The first question my team had was “Is DoltLite maintainable?” The code literally has magic in it.

```c
/* Validate magic */
magic = PROLLY_GET_U32(pData + PROLLY_MAGIC_OFF);
if( magic!=PROLLY_NODE_MAGIC ){
  return SQLITE_CORRUPT;
}
```

The code is by agents for agents. Humans, code review at your own risk.
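For readers who have not met this kind of magic before: a magic number is a fixed value stored at a known offset so a reader can reject buffers that are not the expected format. Here is a minimal sketch of the same check in Python. The constant, the offset, and the byte order are all invented for illustration and are not taken from DoltLite.

```python
import struct

# Hypothetical layout for illustration only: a 4-byte big-endian magic number
# at offset 0, followed by the node payload. Not DoltLite's real constants.
NODE_MAGIC = 0x50524C59  # the ASCII bytes "PRLY"
MAGIC_OFFSET = 0


def validate_node(data: bytes) -> bool:
    """Return True when the buffer starts with the expected magic number."""
    if len(data) < MAGIC_OFFSET + 4:
        return False  # too short to even hold the magic
    (magic,) = struct.unpack_from(">I", data, MAGIC_OFFSET)
    return magic == NODE_MAGIC


good = struct.pack(">I", NODE_MAGIC) + b"payload"
bad = b"\x00\x00\x00\x00payload"
print(validate_node(good), validate_node(bad), validate_node(b"PR"))
# True False False
```

The C snippet above plays the same role: a node whose first bytes do not match is reported as `SQLITE_CORRUPT` instead of being parsed.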

Gas Town Review#

As you can tell from my chronology, Gas Town was critical to the implementation speed of DoltLite. Gas Town’s multi-agent parallelism was more useful in the beginning. As the project got closer to complete, it was just me and the Mayor hashing things out directly. Using Beads for task management stayed useful throughout the project lifecycle. “Forget about that. File a bead for later.” is a very useful pattern.

Dealing with the Mayor is the right abstraction for me. I never left my Claude Code session with the Mayor. The TMUX interface existed but I only used it to scroll around in my conversation with the Mayor. Gas Town just worked and I never needed to switch to Polecat sessions. If I had a problem, like Polecats merging directly to master, I took it up with the Mayor and it resolved the issue.

Using the latest Claude can sometimes result in Claude using tasks or subagents instead of Beads and Polecats. I was ok with this. If I really wanted Gas Town to do its thing I told the Mayor specifically to use it. I reported this experience in the Gas Town Discord and to Steve himself. Steve assured me this was close to his experience as well. The Discord recommended I mod my Gas Town but I didn’t have time to learn how to do that.

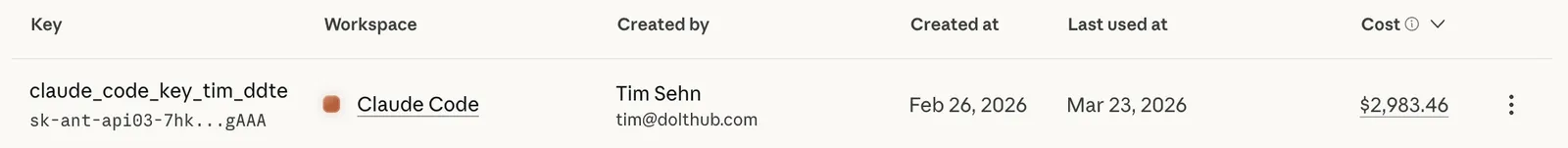

And the bill? The whole experience cost me $3,000.

I previously reported that Gas Town spends about $100/hour and this seems about right. I probably used Gas Town 6 to 8 hours per day. You can alleviate this cost with a $200/month Claude Code Max account for now but, as I said, I think tools like Gas Town are going to kill those plans. Gas Town is expensive.
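As a quick sanity check on those numbers, here is the back-of-the-envelope arithmetic. The five working days are an assumption taken from the Day 1 through Day 5 chronology; the other figures are the ones quoted above.

```python
HOURLY_RATE_USD = 100         # "about $100/hour"
DAYS = 5                      # assumed: Day 1 through Day 5
HOURS_PER_DAY = (6, 8)        # "6 to 8 hours per day"
MAX_PLAN_USD_PER_MONTH = 200  # Claude Code Max price quoted above

low, high = (HOURLY_RATE_USD * h * DAYS for h in HOURS_PER_DAY)
print(f"estimated metered cost: ${low:,} to ${high:,}")
# estimated metered cost: $3,000 to $4,000
print(f"months of a Max plan for the low estimate: {low / MAX_PLAN_USD_PER_MONTH:.0f}")
# months of a Max plan for the low estimate: 15
```

The $3,000 bill sits at the low end of that range, which is consistent with the $100/hour estimate.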

Why did this work?#

If you got this far, you may be in shock. I still am. I’m not sure why exactly this worked but I’m fairly certain it did. Here’s a semi-ordered list of factors I think made this experiment successful.

- Dolt and SQLite exist. The agents had two reference implementations to crib from.

- SQLite had a clean interface to swap Prolly Trees into.

- Agents need tests. SQLite has a large suite of existing acceptance tests. sysbench tests were the key to uncovering deeper issues.

- SQLite is a small code base. The agents did not struggle with context.

- I’m a deep subject matter expert having worked on Dolt for almost eight years.

- I had a close to unlimited budget.

Conclusion#

I spent a week in Gas Town and $3,000 and all I got was this DoltLite. Pretty amazing if you ask me. Try it for yourself and tell me what you think on our Discord.